Artificial intelligence has moved from testing rooms into real business workflows.

Companies are now using AI to answer customer questions, improve product content, forecast demand, review campaign performance, analyze ecommerce data, and support faster decision-making.

The question has changed.

Businesses are no longer asking, “Should we use AI?”

They are asking, “How do we use AI safely inside real operations?”

That is where many AI projects face their first real test.

A strong AI model can read data, find patterns, generate content, and support automation. But a strong model alone does not make an AI system safe, reliable, or ready for business use.

In a live business environment, AI needs rules. It needs review systems. It needs data controls. It needs accountability. It needs clear limits on what it can and cannot do.

That is why safe AI deployment needs AI guardrails.

For enterprises, brands, retailers, and ecommerce teams building custom AI systems, the real advantage will not come from using the biggest model. It will come from using AI that is secure, controlled, measurable, and aligned with business goals.

Table of Contents

What Are AI Guardrails?

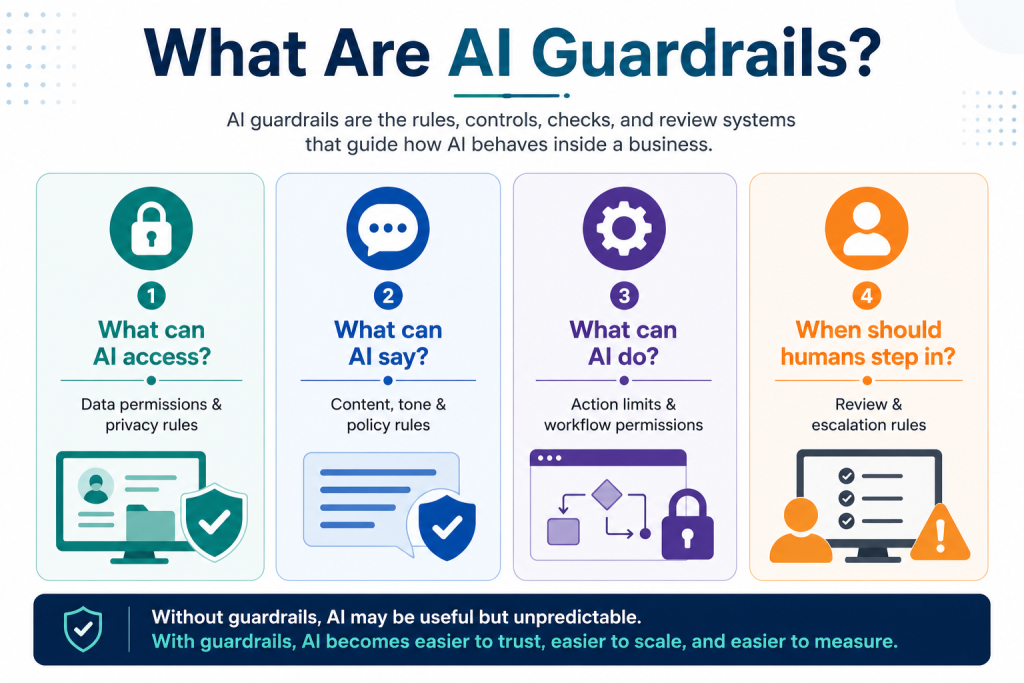

AI guardrails are the rules, controls, checks, and review systems that guide how AI behaves inside a business.

They help ensure that AI outputs are accurate, secure, policy-compliant, and suitable for real business use.

In simple terms, AI guardrails answer four important questions:

| Guardrail Question | What It Means |

| What can AI access? | Data access and privacy rules |

| What can AI say? | Content, tone, and policy rules |

| What can AI do? | Action limits and workflow permissions |

| When should a human step in? | Review, approval, and escalation rules |

Without guardrails, AI may be useful but unpredictable.

With guardrails, AI becomes easier to trust, easier to scale, and easier to measure.

What Is Safe AI Deployment?

Safe AI deployment means using AI in live business workflows with the right controls for data, accuracy, compliance, human review, and accountability.

It is not just about whether the model can perform a task. It is about whether the system can perform that task responsibly.

For example:

A model may be able to answer a customer query. But should it answer every query without review?

A model may be able to suggest pricing changes. But should it push those changes live automatically?

A model may be able to create product content. But does that content follow brand rules, marketplace policies, and category requirements?

These questions show the difference between AI capability and AI readiness.

A good model may work well in a demo. A safe AI system works responsibly when connected to real data, real customers, and real business decisions.

Why Model Performance Is Not Enough ?

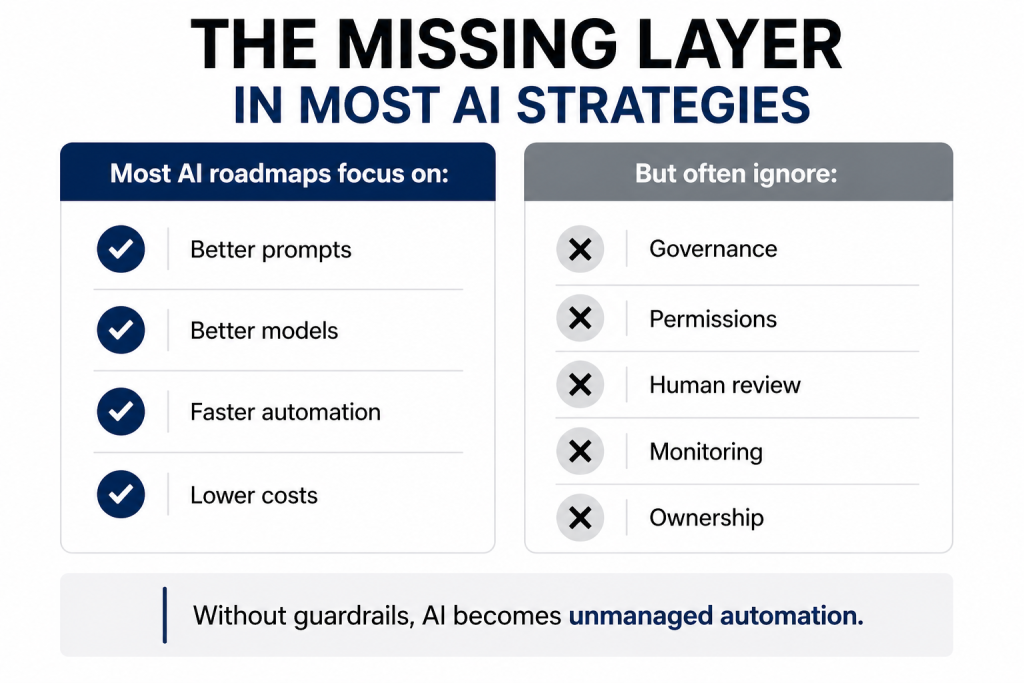

Many businesses assume that successful AI deployment starts and ends with choosing a better model.

That is only one part of the equation.

A model’s performance shows how well it can understand inputs, generate responses, identify patterns, or make predictions. But real deployment is more complex.

Once AI is connected to business workflows, it may interact with:

| Business Area | AI Use Case | Risk Without Guardrails |

| Customer support | Answering product or service questions | Wrong answers, poor customer experience |

| Ecommerce content | Creating product descriptions, titles, bullets, and FAQs | Policy violations or inaccurate claims |

| Pricing | Suggesting price changes | Revenue loss or margin impact |

| Inventory | Forecasting demand | Stockouts or excess inventory |

| Marketing | Reviewing campaign data and content | Off-brand messaging or weak decisions |

| Analytics | Giving business recommendations | Poor decisions from incorrect outputs |

In a test environment, an AI mistake may be easy to fix. In live operations, the same mistake can affect revenue, customer trust, compliance, and brand reputation.

This is why AI deployment needs more than technical strength. It needs business judgment built into the system.

Why Custom AI Needs Stronger Guardrails ?

Custom AI systems are built for specific business needs. They often work with internal data, product catalogs, pricing history, campaign data, customer records, or operational workflows.

Examples of custom AI include:

| Custom AI Use Case | What It Does |

| Ecommerce operations assistant | Helps teams track catalog, pricing, availability, and marketplace issues |

| Product content automation | Creates or improves titles, bullet points, descriptions, and FAQs |

| Pricing recommendation system | Suggests pricing actions based on margin, demand, and competition |

| Customer service chatbot | Answers queries using brand policies and support data |

| Campaign analysis tool | Reviews ad performance and suggests next actions |

| Demand forecasting system | Predicts inventory needs based on sales and market signals |

These systems are valuable because they work close to real business decisions.

But that also makes them riskier.

A general AI tool giving a weak answer may waste a few minutes. A custom AI system connected to pricing, inventory, compliance, customer communication, or marketplace content can create larger business problems.

That is why custom AI guardrails should be planned before the system goes live, not added later after an issue appears.

Key Guardrails Every Business Needs

1. Data Privacy and Access Controls

AI systems often rely on sensitive data such as customer information, pricing history, contracts, or operational metrics.

Without strict access controls, businesses risk exposing confidential information internally or externally.

Safe deployment requires:

- Role-based access permissions

- Data masking for sensitive records

- Secure integrations

- Controlled data retention policies

- Compliance with privacy regulations

The more valuable the data, the stronger the controls must be.

2. Human-in-the-Loop Oversight

Not every decision should be fully automated.

For high-impact functions such as pricing changes, legal communication, campaign approvals, or financial recommendations, human review remains essential.

A practical AI system knows when to escalate.

Guardrails should define:

- Which outputs need approval

- Which actions can be automated

- Confidence thresholds for intervention

- Escalation workflows for anomalies

AI should enhance human judgment—not replace it blindly.

3. Accuracy Monitoring

Models can drift over time. Customer behavior changes. Market trends shift. Inputs evolve.

An AI model that performed well six months ago may underperform today.

Continuous monitoring helps track:

- Output quality

- Prediction accuracy

- Error frequency

- Changing user behavior

- Broken integrations

Deployment is not a one-time event. It is an ongoing performance responsibility.

4. Bias and Fairness Controls

If AI is trained on incomplete or skewed historical data, it can repeat unfair patterns.

For example:

- Recommending certain products disproportionately

- Prioritizing certain customer segments unfairly

- Creating biased hiring or screening outputs

- Producing culturally insensitive content

Responsible businesses need regular audits, testing scenarios, and fairness checks built into the system.

5. Brand and Policy Alignment

Custom AI should not operate separately from the business identity.

A brand-safe AI system should understand:

- Approved tone of voice

- Regulatory claims limits

- Category restrictions

- Escalation language

- Service policies

- Internal standards

This is especially critical in ecommerce, healthcare, finance, and consumer goods where messaging accuracy matters.

The Hidden Cost of Ignoring Guardrails

Many businesses rush to launch AI pilots because competitors are doing the same. But speed without governance often creates expensive setbacks.

Common consequences include:

- Incorrect recommendations damaging trust

- Hallucinated responses confusing customers

- Sensitive data leakage

- Non-compliant communication

- Operational disruption from automation errors

- Internal resistance due to lack of transparency

In many cases, AI failure is not caused by the model itself. It is caused by poor deployment planning.

Safe AI Deployment Checklist for Enterprises

Before deploying a custom AI system, businesses should ask these questions.

| Area | Question |

| Business fit | What problem is this AI system solving? |

| Users | Who will use the system? |

| Data | What data will the AI access? |

| Privacy | Is sensitive data protected? |

| Access | Are permissions role-based? |

| Accuracy | How will outputs be checked? |

| Human review | Which outputs need approval? |

| Compliance | Are policy and legal risks covered? |

| Monitoring | How often will performance be reviewed? |

| Ownership | Who is accountable for the system? |

| Escalation | What happens when AI is unsure or wrong? |

| Measurement | What business outcome will be tracked? |

This checklist helps businesses move from AI testing to AI maturity.

What Businesses Should Ask Before Deploying AI ?

Before launching any custom AI solution, organizations should evaluate:

- What business problem is being solved?

- What risks come with automation?

- What data is involved?

- What approvals are required?

- How will outputs be measured?

- Who owns accountability?

- What happens when the AI is wrong?

These questions create maturity. Without them, AI becomes a liability.

Why Industry Context Matters in AI Governance ?

AI governance should not be generic.

An ecommerce brand may need controls around product claims, marketplace policies, pricing, inventory, and customer reviews.

A retailer may need governance for demand forecasting, supplier data, store-level data, stock planning, and promotions.

A marketplace may need moderation rules, fraud checks, catalog quality controls, and customer support consistency.

A finance company may need strict controls around sensitive data, regulated language, and customer communication.

This is why safe AI deployment should combine technology knowledge with industry knowledge.

A safe AI system is not just technically correct. It understands the business environment where it operates.

How Paxcom Helps Businesses Build Safe Custom AI

At Paxcom, we believe AI should support measurable business growth without putting trust, data, or brand integrity at risk.

That means building AI systems that are not only capable, but also ready for real business use.

Our approach focuses on:

| Paxcom Approach | What It Means for Businesses |

| Ecommerce and retail expertise | AI systems are built with category and channel context |

| Custom AI solutions | Use cases are aligned to specific business goals |

| Governance-first deployment | Safety checks are planned from the start |

| Human review workflows | Teams stay in control of high-impact decisions |

| Secure data handling | Business and customer data are protected |

| Performance tracking | AI outputs are reviewed and improved over time |

| Workflow integration | AI fits into existing business processes |

Whether the goal is improving digital shelf visibility, automating product content, analyzing marketplace performance, or supporting faster decision-making, safe AI deployment is central to long-term success.

Final Thoughts

The future of AI will not be shaped only by businesses that use the most advanced models. It will be shaped by businesses that deploy AI with clarity, control, and responsibility.

Custom AI needs more than intelligence. It needs guardrails that protect data, reduce risk, support accuracy, preserve brand trust, and keep humans involved where judgment matters.

Because in real business environments, success is not only about what AI can do. It is about what AI should do, where it should stop, and how safely it can support better outcomes.

At Paxcom, we help businesses move from AI testing to safe, practical, and business-ready AI systems built for performance, governance, and long-term value.