AI accuracy is now a serious business question for modern brands.

It affects product content, campaign decisions, customer support, pricing, forecasting, marketplace visibility, and AI-led discovery. A model may predict demand correctly, write clean product copy, or recommend a campaign change in seconds.

That still leaves one important question: can the system be trusted when it works inside a live business environment?

Brands need AI governance for brands: the rules, reviews, guardrails, access controls, and audit trails that decide how AI uses data, generates outputs, makes recommendations, and takes action.

Without responsible AI governance, even a highly accurate model can create risk. It may produce hallucinated claims, use outdated data, expose sensitive information, waste media spend, recommend poor pricing decisions, or create actions that nobody can explain later.

For modern brands, AI accuracy is useful only when it sits inside a clear AI risk management system.

Table of Contents

What AI accuracy means

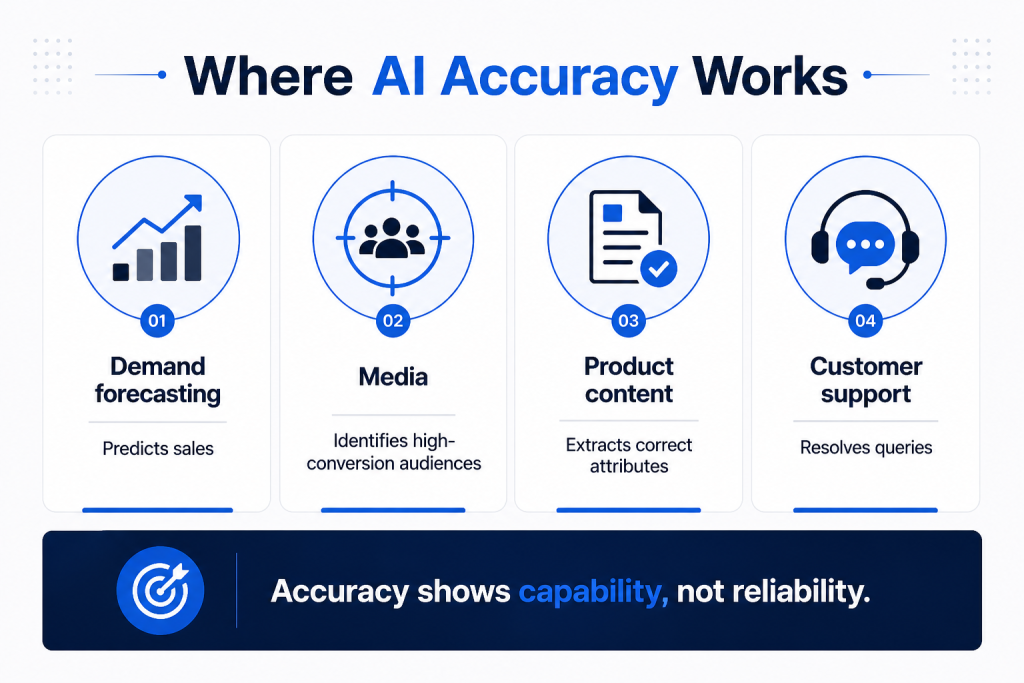

AI accuracy usually means the system’s output matches the expected answer.

In a demand forecast, accuracy may mean the model predicts sales close to actual sales.

In media, it may mean the model identifies the audience segments most likely to click or convert.

In product content, it may mean the tool extracts the right product attributes from a catalog file.

In customer support, it may mean the answer solves the customer’s query without escalation.

These are useful measures. They tell teams whether the AI can perform a task.

They do not tell teams whether the AI should be allowed to act on its own.

A model can be accurate on average and still fail in specific cases that matter. A 95% accurate content tool may still generate 5 incorrect claims in every 100 outputs. If those claims relate to ingredients, warranties, dosage, sustainability, financial terms, or safety, the risk is serious.

For brands, the cost of one wrong output can be much higher than the benefit of many correct ones.

What AI governance For Brands means

AI governance is the system of rules that controls how AI is used inside a business.

For brands, governance usually includes:

- Approved data sources

- Role-based access

- Human review for sensitive use cases

- Brand voice and claim rules

- Marketplace compliance checks

- Budget and pricing limits

- Security and privacy controls

- Audit logs

- Output validation

- Escalation rules

- Ongoing monitoring

Good governance gives teams a clear answer to basic questions.

- Who approved this AI use case?

- Which data did the model use?

- Can the output be checked?

- Who is responsible if the output is wrong?

- Can the system take action automatically?

- When does a human need to review the decision?

Where is the decision recorded?

These questions are becoming more urgent because AI adoption is spreading faster than governance in many companies. IBM defines shadow AI as the use of AI tools by employees without formal approval or IT oversight, which creates risk around data, security, and contro

AI governance for brands

An AI governance framework gives brands a clear way to control how AI is used across teams, tools, and workflows.

- What data can AI use?

AI should pull from approved data sources such as product catalogs, campaign dashboards, pricing files, stock reports, customer policies, and verified internal documents. - Who can use AI tools?

Access should depend on role, team, use case, and data sensitivity. A content user, media manager, analyst, and admin should not have the same level of control. - Which outputs need human review?

Product claims, pricing decisions, customer-facing communication, compliance-heavy content, legal language, and automated actions should have review rules. - What actions can AI take?

Some AI systems should only suggest. Others may draft, classify, summarize, or trigger workflows. High-impact actions need approval before they go live. - Which guardrails are required?

Brands need AI guardrails for budgets, pricing thresholds, claims, banned terms, marketplace rules, customer data, and escalation triggers. - How are decisions tracked?

Every important AI output should have an audit record. Teams should know what data was used, what the AI recommended, who approved it, and what changed after the action. - How is performance monitored?

AI governance should track error rates, hallucination incidents, manual overrides, content rejections, budget anomalies, pricing exceptions, and customer complaints linked to AI outputs.

A good enterprise AI governance system makes AI easier to use safely. It gives teams speed, but keeps decisions inside business-approved limits.

Why accuracy alone creates a false sense of confidence

AI tools often perform well in demos.

A dashboard shows better predictions. A content tool writes in seconds. A media system improves click-through rate. A chatbot answers 50 questions without asking a human.

The problem starts when those outputs enter real business workflows.

Brands operate in changing environments. Competitor prices move daily. Retail media costs shift by campaign and keyword. Marketplace algorithms change. Stock levels vary by pin code. Product listings differ across platforms. Reviews change customer perception. Seasonal demand changes quickly.

An AI model trained on old patterns may make weak decisions when the market changes.

Example: a demand forecast may look accurate for the last 6 months. Then a competitor launches a discount campaign, a key SKU goes out of stock in major cities, and a marketplace changes search placement. The model still produces a forecast, but the forecast no longer reflects current conditions.

Example: an ad tool may increase traffic by pushing more budget to a campaign. If it ignores profitability, stock availability, or repeat purchase quality, the brand may pay for clicks that do not improve business results.

Example: a content engine may create product copy that sounds polished. If it adds an unsupported claim, the listing may be flagged, rejected, or worse, published with incorrect information.

Accuracy needs context. Governance provides that context.

Risk 1: AI hallucinations can damage trust

Generative AI can produce false or misleading information. This is often called hallucination.

A hallucination can look harmless when someone is testing a tool internally. It becomes risky when the output reaches customers, partners, marketplaces, regulators, or sales teams.

For brands, hallucination risk can appear in several places:

- Product descriptions with unsupported benefits

- Customer support replies with incorrect warranty terms

- Chatbot answers about delivery, refunds, or availability

- AI-generated FAQs with outdated policy information

- Sales decks with wrong claims

- Product comparison pages with incorrect competitor details

- AI search results that summarize the brand incorrectly

Regulators are already paying attention. In April 2026, Italy’s antitrust authority closed probes into AI firms after they committed to giving users clearer warnings about hallucination risks and possible inaccuracies.

This matters for brands because AI-generated misinformation can move quickly. A wrong support answer can create customer anger. A wrong product claim can affect compliance. A wrong comparison can damage partner relationships.

Governance reduces this risk by requiring source checks, claim libraries, human review, and clear rules for what AI can say.

Risk 2: Shadow AI exposes brand and customer data

Shadow AI happens when employees use AI tools without company approval or oversight.

It may start with simple work. Someone pastes a customer complaint into an AI tool to rewrite a reply. Someone uploads sales data to summarize performance. Someone asks a public AI tool to improve a pricing proposal. Someone gives campaign data to a browser extension.

The employee may be trying to save time. The business still carries the risk.

Shadow AI can expose:

- Customer names, emails, and phone numbers

- Sales and revenue data

- Product launch plans

- Pricing strategy

- Marketplace performance reports

- Internal documents

- Legal or compliance material

- Client data

A 2026 report covered by TechRadar found that more than 70% of employees were using AI tools weekly, with up to one-third using them outside IT oversight. The same report said 61% of IT leaders saw increased AI-related threats, while only 31% felt confident managing those risks.

For brands, this is a governance issue. Employees will use AI if official systems feel slow, blocked, or unclear. Companies need approved tools, clear policies, and safe workflows so teams do not move sensitive work into unapproved tools.

Risk 3: AI can waste media spend faster than humans

Brands now use AI across Amazon Ads, Flipkart Ads, Google Ads, Meta, quick commerce ads, retail media, and marketplace campaigns.

AI can adjust bids, shift budgets, test creatives, cluster audiences, and suggest keywords. That speed is useful when the rules are clear.

It becomes expensive when the rules are missing.

An AI media system may:

- Spend more on high-click, low-conversion keywords

- Push budget to SKUs with low stock

- Prioritize revenue while ignoring margin

- Keep spending during poor dayparts

- Miss marketplace fees or promo costs

- Treat new customer growth and discount-led repeat orders as equal

- Scale campaigns before content quality is fixed

A human may make a bad media decision once. Automation can repeat that decision thousands of times before the team notices.

Governance protects media spend through budget caps, profitability checks, stock-aware bidding, keyword rules, campaign approval workflows, and alerts.

For example, if a SKU is out of stock in 40% of priority pin codes, AI should not keep raising ad spend for that SKU in those regions. If a campaign drives clicks but weak sales, the system should flag it before the budget burns.

Risk 4: Pricing decisions can hurt margins and brand value

Dynamic pricing is one of the most sensitive AI use cases for brands.

A pricing model may recommend a discount to win more share. That recommendation may look accurate if the system is trying to increase sales volume. The business impact changes if the discount reduces margin, creates channel conflict, or trains customers to wait for lower prices.

Pricing decisions affect:

- Gross margin

- Retailer relationships

- Marketplace positioning

- Consumer perception

- Promo planning

- Sales team commitments

- Distributor confidence

A brand may want to protect a minimum margin. It may want to avoid undercutting a key retail partner. It may want to run discounts only during planned promotional windows. It may want to prevent certain SKUs from entering price wars.

AI will not know these rules unless the system has them.

Governed pricing systems set limits before price changes go live. They include margin floors, approval triggers, channel-specific rules, promo calendars, competitor thresholds, and audit logs.

The aim is simple: AI can recommend price changes, but the brand decides the boundaries.

Risk 5: Bad data creates bad decisions

AI depends on data quality.

If the input data is wrong, stale, incomplete, duplicated, or inconsistent, the output becomes unreliable.

For brands, this happens often because commerce data is spread across many sources:

- Marketplace dashboards

- Retail media platforms

- Quick commerce portals

- D2C websites

- ERP systems

- CRM tools

- Inventory files

- Product catalogs

- Agency reports

- Distributor data

- Customer reviews

- Search ranking trackers

A product may appear available in one system and out of stock in another. A campaign may show strong ROAS, but the sales data may exclude returns. A product title may differ across channels. Review data may be outdated. Category mapping may be inconsistent.

AI can read all of this and still produce the wrong recommendation if the data is not checked first.

Governance adds data rules:

- Which source is trusted for each metric?

- How fresh the data needs to be?

- How duplicate SKUs are handled?

- How missing values are flagged?

- How marketplace data is matched to internal product codes?

- How outputs change when confidence is low?

McKinsey’s 2025 global AI survey found that AI high performers are more likely to define processes for when model outputs need human validation to ensure accuracy.

That is a useful lesson for brands. Human review is not a weakness in AI adoption. It is a control for high-impact decisions.

Risk 6: AI content can break brand and marketplace rules

AI content governance is now a serious need for brands.

Marketing teams use AI to create titles, bullet points, product descriptions, A+ content, social posts, email copy, ad variations, FAQs, and chatbot responses.

The risk is not only poor writing. The risk is uncontrolled claims.

AI may create content that:

- Uses claims the brand cannot prove

- Mentions benefits that are not approved

- Breaks marketplace character limits

- Uses restricted words

- Adds medical or financial claims

- Changes product meaning

- Creates inconsistent messaging across channels

- Uses a tone that does not match the brand

- Misses required disclaimers

- Reuses competitor language too closely

For a fashion brand, this may create inconsistency. For an FMCG, personal care, pharma, baby care, finance, insurance, or health brand, it can create compliance risk.

AI content governance should include approved claim banks, banned terms, marketplace rules, product attribute checks, legal review for sensitive categories, and version history.

This is especially important for AI-led discovery. AI search engines and shopping assistants often read product pages, FAQs, reviews, and structured data to answer customer questions. If brand content is inconsistent or inaccurate, AI systems may summarize the brand incorrectly.

Risk 7: AI agents can take action before teams notice

AI agents are different from basic AI assistants.

A basic assistant may answer a question. An AI agent may complete a task: update a file, send an email, trigger a workflow, change a campaign, create a ticket, or call an API.

This makes governance more urgent.

Deloitte predicted that 25% of enterprises using generative AI would deploy AI agents in 2025, rising to 50% by 2027.

For brands, agentic AI may soon support tasks such as:

- Updating product listings

- Sending customer replies

- Changing campaign budgets

- Creating marketplace reports

- Triggering stock alerts

- Recommending replenishment

- Editing product content

- Creating sales summaries

- Routing customer complaints

- Sending partner updates

Each action needs clear permissions.

An AI agent should not have the same access for every task. A content agent may suggest PDP changes but should not publish them without review. A media agent may recommend budget shifts but should not cross approved spend limits. A support agent may answer common queries but should escalate complaints, refunds, legal issues, and safety concerns.

Agent governance should define who can create agents, what tools they can access, what data they can read, what actions they can take, and how their actions are logged.

Risk 8: Lack of audit trails creates accountability gaps

When AI influences a decision, teams need a record.

This is simple in theory. It is often missing in practice.

A brand should be able to answer:

- What did the AI recommend?

- What data did it use?

- What prompt or workflow triggered the output?

- Who approved the action?

- Was the output edited?

- When did the action happen?

- What changed after the action?

- Did the system follow policy?

Without logs, teams cannot investigate errors. They cannot prove compliance. They cannot improve the system. They cannot explain decisions to clients, leaders, platforms, or regulators.

IBM’s 2025 AI at the Core research found that nearly 74% of surveyed organizations had only moderate or limited coverage in AI risk and governance frameworks for technology, third-party, and model risks.

That gap becomes dangerous when AI decisions affect customers, spend, pricing, or regulated claims.

Audit trails make AI easier to trust because teams can inspect what happened.

What strong AI governance looks like for brands

AI governance does not need to start as a heavy policy document that nobody reads.

It can start with practical controls around the areas where AI creates the most business risk.

1. Use case classification

Every AI use case should be classified by risk.

Low-risk use cases may include summarizing internal notes, drafting first-version copy, or clustering public reviews.

Medium-risk use cases may include product content suggestions, campaign recommendations, or customer support drafts.

High-risk use cases may include pricing changes, compliance-heavy content, refund decisions, financial advice, health claims, legal material, or automated customer communication.

The level of review should match the risk.

2. Approved data sources

Teams need clarity on what data AI can use.

For example:

- Product content should come from approved catalog data.

- Pricing recommendations should use current margin and promo rules.

- Media recommendations should use spend, sales, stock, and profitability data.

- Customer support answers should use approved policy documents.

- AI search content should use verified product pages, FAQs, and schema.

Bad data rules create bad outputs.

3. Human review rules

Human oversight should be defined before teams use the system.

Brands can set rules such as:

- AI can draft product copy, but a human must approve claims.

- AI can recommend budget changes, but spend above a set amount needs approval.

- AI can answer common support queries, but refund disputes must be escalated.

- AI can suggest pricing changes, but margin-impacting changes need review.

- AI can summarize reviews, but product safety issues need manual checking.

This prevents confusion during live use.

4. Guardrails for content, media, and pricing

Guardrails are business rules inside the AI workflow.

For content, guardrails may include approved claims, tone rules, marketplace limits, banned words, and compliance checks.

For media, guardrails may include daily spend caps, ROAS thresholds, stock rules, and profitability checks.

For pricing, guardrails may include margin floors, promo calendars, competitor limits, and approval workflows.

The point is to make the AI operate inside business-approved limits.

5. Security and access control

AI systems should follow the same access discipline as other business systems.

Not every user should access every dataset. Not every AI workflow should connect to every tool.

A brand should define:

- Who can create AI workflows

- Who can approve outputs

- Which teams can access customer data

- Which tools can connect to APIs

- Which actions need admin approval

- Which external tools are blocked

- How sensitive data is masked

This reduces shadow AI and limits damage if something goes wrong.

6. Monitoring and reporting

AI governance needs ongoing monitoring.

Teams should track:

- Output accuracy

- Error rates

- Escalations

- Hallucination incidents

- Policy violations

- Manual override rates

- Budget anomalies

- Pricing exceptions

- Content rejection rates

- Customer complaints linked to AI outputs

These metrics show where the AI system is useful and where controls need work.

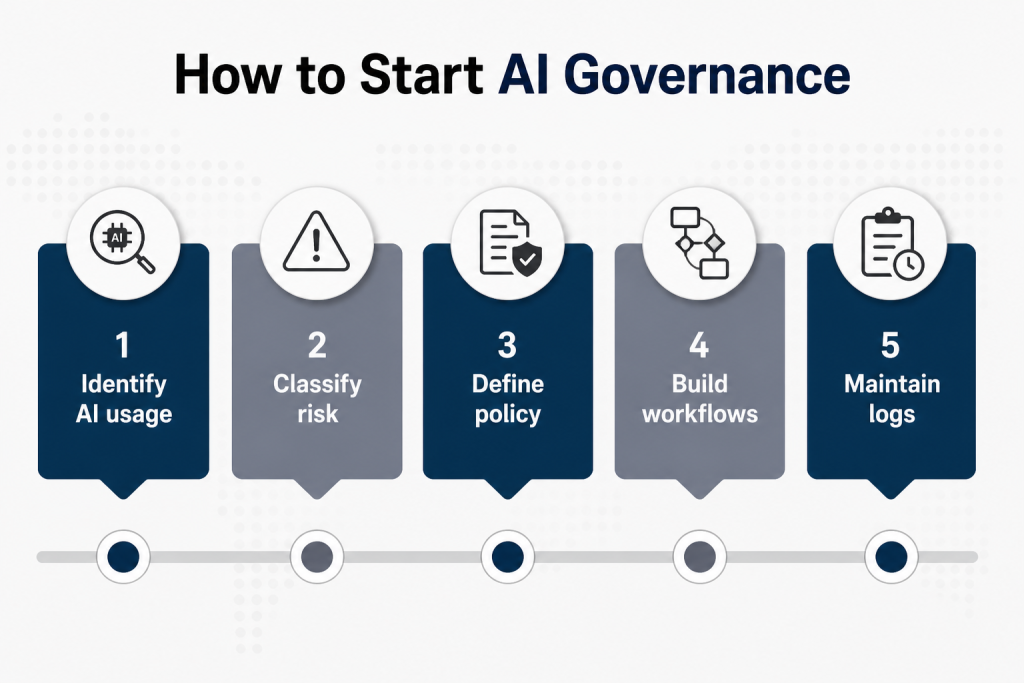

How brands can start with AI governance

A brand does not need to solve everything at once. Start with 5 practical steps.

Step 1: List where AI is already being used

Include official and unofficial use.

Check marketing, content, media, sales, customer support, analytics, ecommerce, finance, and operations.

Ask simple questions:

- Which AI tools are teams using?

- What data are they uploading?

- What outputs are reaching customers?

- Which workflows affect money, claims, or customer experience?

This gives the brand a clear starting point.

Step 2: Separate low-risk and high-risk use cases

A caption draft and a pricing change do not need the same controls.

Classify use cases by business impact.

Higher-risk use cases should get stronger review, better data controls, and detailed logs.

Step 3: Create an approved AI policy

The policy should be short and usable.

It should explain:

- Which tools are approved

- Which data cannot be uploaded

- Which tasks need review

- Which claims need approval

- Which teams own AI governance

- What to do when AI output seems wrong

A policy is useful only if teams can understand it.

Step 4: Build review workflows into daily work

Governance should happen inside the workflow, not after the damage is done.

If a product content team uses AI, review should be part of the content approval process.

If a media team uses AI, budget rules should sit inside campaign workflows.

If customer support uses AI, escalation rules should sit inside the support system.

Step 5: Keep logs

Every AI-assisted action should leave a record.

This record should show the input, output, approval, action, and result.

Logs help teams fix errors, prove accountability, and improve future outputs.

Where Paxcom fits in this conversation

Paxcom builds AI for brands operating across multiple marketplaces, under constant competitive pressure, with channel-specific constraints and margin targets that shift by quarter.

The products are designed with the assumption that accuracy and governance matter equally. A pricing tool with 94% recommendation accuracy but no margin floors is a liability. A media tool that can’t explain its budget allocation creates dependence, not capability.

The goal is AI that teams can trust, inspect, and override when the business situation calls for it. That’s not a conservative approach. It’s the only approach that works reliably at scale.

Final thoughts

AI accuracy is useful. Governance makes it usable. For brands, the risk is no longer limited to whether AI gives a wrong answer. The larger risk is whether that answer reaches the wrong place, triggers the wrong action, uses the wrong data, or goes live without review.

Modern brands need AI systems with controls: approved data, human review, guardrails, access rules, monitoring, and audit trails.

That is how brands can use AI with speed and still protect trust, compliance, and business performance.